Discover how hireful’s AI shortlisting feature is intentionally designed to minimise bias in recruitment—through transparent criteria, structured guidance, ongoing monitoring, and practical training—while keeping recruiters in full control.

How we minimise bias with our AI Shortlisting feature

Background

You don’t have to look far to find conversations (on LinkedIn, in industry circles, or in the media) highlighting the risks of bias within artificial intelligence, especially when AI is applied to recruitment. A well-known example, reported on the BBC News website, describes how Amazon abandoned an internal hiring tool all the way back in 2016 after discovering it was biased against female candidates: BBC article.

This kind of example understandably creates concern among HR professionals and candidates alike. And rightly so: if left unchecked, bias in recruitment AI doesn’t just damage the candidate experience; it undermines fairness, diversity, and trust in the entire hiring process.

Most recruitment-focused AI tools on the market today are built to help identify and recommend the "top" candidates from a wider pool of applicants. On the surface, this seems helpful. But dig a little deeper, and you’ll find that the algorithms making these recommendations are often trained using historical data—data based on how similar candidates were treated in the past.

This is where the problem begins.

Why bias creeps in with the traditional AI shortlisting approach

AI trained on historical decision-making data inherits the patterns within it—including the biases. Here are just a few reasons why this approach can be problematic:

- Historical bias: If past hiring decisions favoured certain demographics (intentionally or not), the AI will learn to mimic that pattern.

- Data quality issues: CVs or hiring outcomes used to train the AI may not reflect objective indicators of success but rather subjective, unrecorded or flawed judgements.

- Lack of transparency: Many models act as “black boxes”, making it difficult for HR teams to understand or explain how decisions are made—especially to candidates.

- Proxy discrimination: Even when protected characteristics like gender or ethnicity are excluded, the AI can still use correlated factors (e.g. name, location, school) as proxies, perpetuating unfair advantage or disadvantage.

These are not just technical issues—they are ethical concerns that impact real people’s lives and livelihoods. And they highlight why responsible AI development in recruitment must start from a place of intentional design, not just technical innovation.

Why we took a different approach

When we set out to develop our AI shortlisting feature, we made a deliberate choice to simplify both the technology and the goal. We asked ourselves a core question:

What is the recruiter really trying to do when shortlisting a CV?

The answer was clear. At this stage, recruiters aren’t making complex final decisions—they’re simply trying to find evidence that the applicant meets key requirements. For instance:

Does this person have a forklift truck licence? Is there evidence of that in the CV?

So rather than building a model that tries to predict or replicate previous hiring outcomes, we asked the AI to do something simpler, more transparent, and—critically—less prone to bias.

Our AI doesn’t decide who is best. It simply helps answer factual questions based on anonymised CV content and pre-defined criteria. The recruiter is still in control, setting the criteria and making the decisions.

Why we talk about minimising—not eliminating—bias

You might be asking: If bias in recruitment is such a known issue, why not aim to eliminate it altogether?

It’s a fair question, and one we want to answer clearly and honestly.

The reality is, while we are deeply committed to reducing bias, we believe it would be irresponsible and inaccurate to claim we can eliminate it entirely. No recruitment process—human-led or AI-supported—can be entirely free from bias.

That’s why we talk about minimising bias rather than eliminating it. This isn’t about aiming low—it’s about being realistic, transparent, and ethical in what we claim. Minimising bias means acknowledging the challenge, then doing everything we can to reduce it as far as possible.

We can confidently say that our AI shortlisting feature helps reduce bias when compared to a typical human-led process. To illustrate this, let’s look at a common recruitment scenario where human bias can easily creep in.

Why human shortlisting is vulnerable to bias

Consider the following scenario:

- A recruiter has 200 CVs to review in a single morning.

- By CV 30, fatigue starts to affect focus and decision-making.

- If six strong candidates appear early, are the rest reviewed with the same level of attention and rigour?

- Different vacancies have different requirements, often added by hiring managers with varying levels of experience and clarity.

- Then there’s unconscious bias—snap judgements based on names, education, work gaps, or even formatting preferences.

These are not rare or extreme situations. They’re everyday realities. And while structured processes and training help, they still leave plenty of room for human inconsistency.

How our AI shortlisting helps to minimise bias

When we designed our AI shortlisting feature, we set out to reduce these risks by creating a system that is structured, consistent, and easy to understand. Here's how our approach helps to minimise bias:

- System design

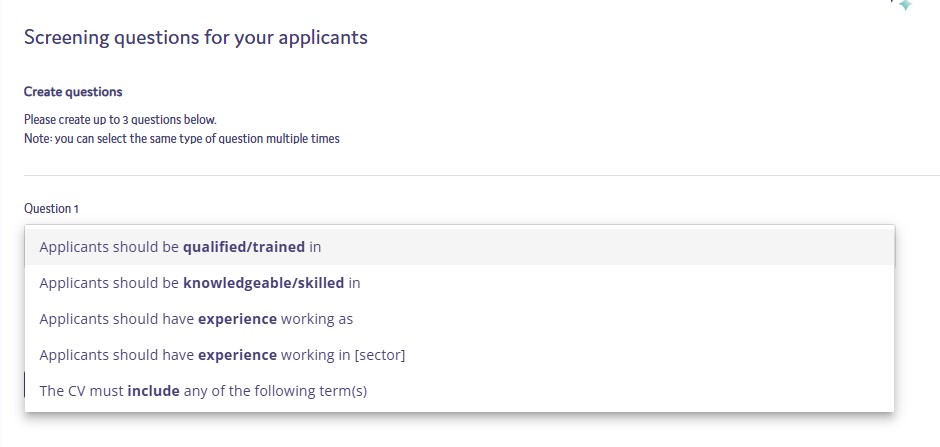

Using AI shortlisting will be a new experience for our customers, so we’ve built this feature into our ATS in a way that guides users towards adding high-quality screening criteria.

We have seen other systems that simply offer a “prompt box” where the user types instructions for the AI to follow when shortlisting. This is similar to the experience of using ChatGPT, where the range of tasks you can ask it to help with is effectively limitless.

However, as we see it, the AI shortlisting task is fairly fixed: review the applicant’s CV and check whether there is evidence that they meet the criteria. Providing an open text box risks confusing users and increases the chance of bias creeping into the process.

Instead, we provide the first half of each criterion: the user selects the most relevant question starter and then adds the extra context to complete it. This guides them to confirm up to three relevant criteria, which the AI shortlisting then uses to score applicants.

- Training

We’ve gone beyond the standard “here are the instructions for turning on this feature”. We see it as our responsibility to ensure that you and your colleagues understand how this feature works, and how it should and should not be used.

With this in mind, we’ve created the following training resources and guides:

- How our AI shortlisting works: Read more here

- Examples and guidance on crafting effective AI shortlisting questions: Read more here

- Using AI Shortlisting: Guidance for EU-based roles and applicants: Read more here

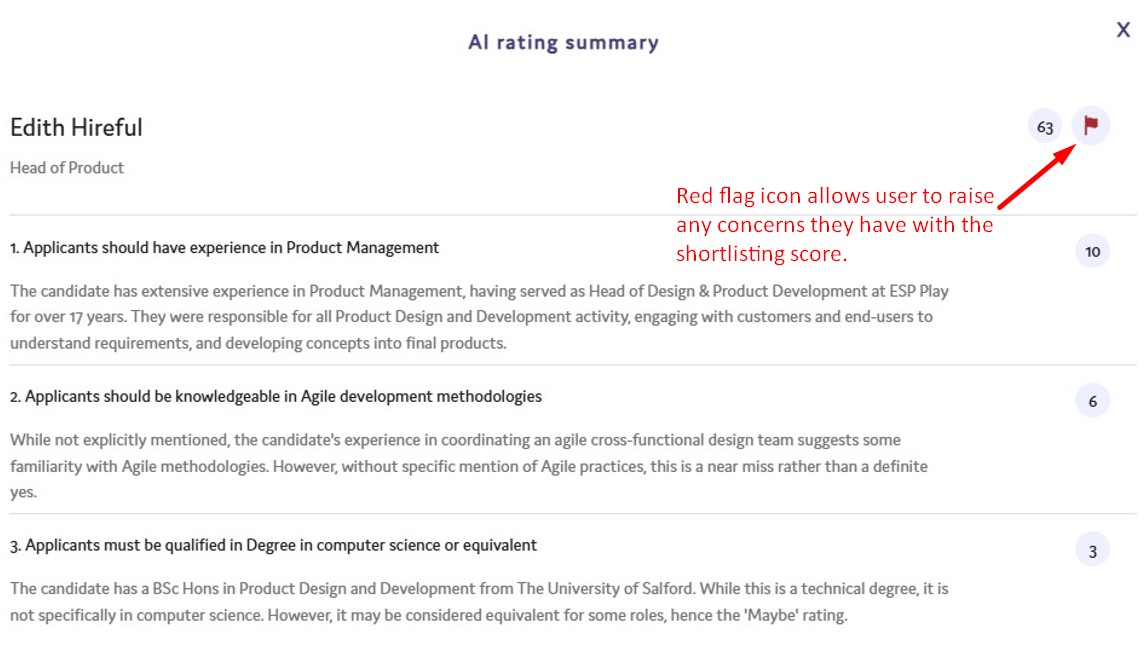

- Monitoring

Our own internal team of recruitment consultants used this feature before it was released to customers, and they continue to use it every day. They have received extra training on how AI shortlisting works, and they act as a frontline resource, reporting any concerns about the feature or its limitations back to the product team.

Beyond our internal team, we encourage customers to “flag” any shortlisting results they are concerned about. Our technical support team will investigate each flagged result and feed back its findings to the customer.

We will also audit the screening criteria every customer is using on a monthly basis, providing feedback and offering additional training where we think it will help customers use this feature more confidently.

The process of minimising bias is an ongoing one. We are not arrogant enough to believe that we have the perfect solution. We will closely watch the data that shows us how this feature is being used and talk with customers about ideas they have on how we can improve it.

This blog post will be updated periodically as we add in additional safeguards and have additional statistics and information to share.

Ready to find out more about hireful’s ATS and our AI shortlisting?

You can read about our AI shortlisting feature here.

When you are ready, feel free to contact our team to discuss how this could reduce bias and streamline your recruitment process.

Is it legal to use AI for shortlisting candidates in the UK?

Yes, using AI for shortlisting is legal in the UK, provided you comply with data protection laws such as the UK GDPR. The key is ensuring that the AI tool supports human decision-making rather than making “solely automated decisions” without meaningful human involvement. You must also be transparent with candidates about how their data is being processed, so it is good practice to update your privacy policy to explain how you use AI when processing candidate data.

Can candidates challenge an AI-generated rejection?

Yes, candidates have the right to question how their data was handled. To stay compliant and fair, you should provide a feedback channel (such as a dedicated email address) where candidates can raise concerns. If challenged, you must be able to explain the logic behind the decision. It is therefore important both to understand how the AI tools you use actually work and how they ensure fairness, and to be able to explain this to any candidate in an easy-to-understand way. Making that explanation easier was one of the main reasons we wrote this blog post: we hope hireful ATS customers will share it with candidates to explain how the AI works and how it helps ensure fairness.
