A practical guide to ATS selection criteria for SMEs, including weighted scoring, demo questions and decision thresholds.

ATS selection criteria for SMEs should focus on business outcomes rather than feature lists. The right evaluation framework measures workflow fit, adoption, candidate experience, compliance, data utility and future readiness. This article provides a structured scoring model small businesses can use to evaluate and choose an applicant tracking system with confidence.

Most SMEs evaluate ATS options by comparing feature lists and choosing on price or interface familiarity. I understand why. I have been on those MS Teams calls. I get it: it feels logical.

The problem is that feature lists tell you what a vendor has built, not whether the system will fix your hiring bottlenecks or actually be used by your team. A demo showing 47 integrations and AI-powered matching proves capability. It does not prove relevance.

We recently demoed our ATS to a medium-sized law firm that was looking for its first ATS. They had already seen several other systems. We knew that their "fee earning" roles (lawyers, partners and so on) would be sourced mostly through recruitment agencies, so during our demo we asked whether they would like to see how our ATS supports employers working with agencies.

No other ATS provider had shown them this workflow. That was not because the other systems could not support it, but because the salesperson, and potentially the customer, were happy looking at the shiny features and getting excited about a new ATS. Had they gone ahead without confirming that their agencies would be supported, they risked having only their direct applicants in the ATS, with agency submissions floating around in mailboxes.

The framework below is what we use at hireful when speaking with prospective customers. It shifts the conversation from 'what does it include?' to 'what does it enable?' and gives SME buyers a measurable way to make a defensible decision.

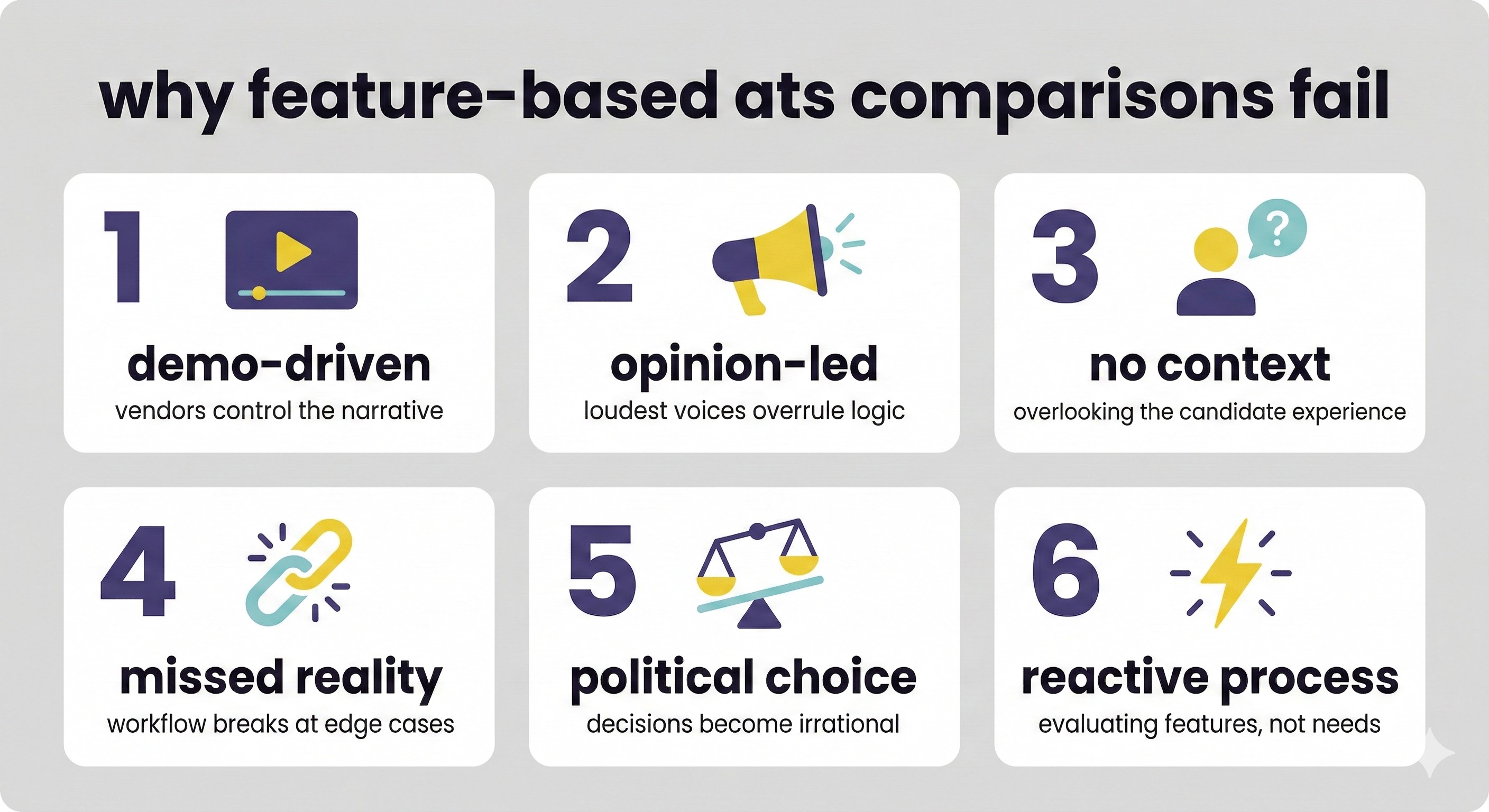

Why feature-based ATS comparisons fail SMEs

It is worth explaining why the standard approach leads to poor decisions. There are three specific failure modes that trap SME buyers into selecting the wrong ATS.

Failure mode 1: demo-driven decisions. Vendors control the narrative in demos. Every system looks capable when shown by someone who knows it well. The demo showcases strengths and glosses over the edge cases where the workflow breaks. You leave with the impression it looks good, but you have not tested it against the scenarios that actually matter to your organisation.

Failure mode 2: no shared evaluation criteria. Without shared criteria, the decision defaults to whoever has the strongest opinion or loudest voice, not whoever has the best analysis. The recruiter loves the AI shortlisting feature. The hiring manager just wants something easy to use. Finance is confused why it costs more than their Xero licensing. Often the decision becomes political rather than rational.

Failure mode 3: features without context. A feature that solves a low-volume recruiter challenge in a cool way might leave a greater impression on the recruiter users who are choosing the solution. But the users not in the room, such as hiring managers and, importantly, candidates, can have their requirements overlooked. A feature list grows longer during the demo process as you see new capabilities you had not considered. This alone should tell you your process is flawed. If your list keeps growing every time you see a demo, you are reacting, not evaluating.

Feature lists end up being an ever-growing collection of ideas gathered from a range of internal stakeholders. The longer the list, the less useful it becomes as an evaluation tool.

Most ATS comparison guides focus on feature checklists. Effective ATS selection criteria for SMEs focus instead on measurable business impact, adoption risk and operational reliability.

The hireful ATS Capability Assessment: six dimensions that actually matter

We assess each ATS across six outcome dimensions, scoring each one from 1 to 5.

What differentiates this from a simple scorecard is the weighting step. We avoided equal weighting because it would obscure the real differences between dimensions. Some areas are business-critical and others are not, and your scoring model should reflect that. Compliance confidence might be business-critical for an NFP organisation operating safer recruitment checks, while data utility may matter less. Conversely, a fast-growth tech startup might assign a weighting of 2.0 to future readiness and 0.5 to compliance confidence.

The weighting step helps you clarify what truly matters to your organisation before you walk into a demo.

How the weighted scoring system works

Before evaluating any vendor, assign a weighting to each dimension based on its importance to your organisation. Use the following scale:

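• 2.0 – Business-critical: underperformance in this area would cause real damage to your organisation.

• 1.5 – High importance: a gap in this area would seriously hinder adoption or outcomes.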

• 1.0 – Standard importance: this matters, but it is not decisive on its own.

• 0.5 – Nice to have: helpful, but not essential to your hiring process.

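Add up your weightings first. For example, if your six weightings are 2.0, 1.5, 1.0, 2.0, 0.5 and 1.0: 2.0 + 1.5 + 1.0 + 2.0 + 0.5 + 1.0 = 8.0

Then multiply the total by the top score of 5: 8.0 × 5 = 40

Your maximum possible score is 40.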

Set your minimum threshold to 60 percent of that total. Sixty percent of 40 equals 24.

Any vendor scoring below 24 does not meet your stated priorities, regardless of how persuasive the demo felt.
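If you would rather keep this arithmetic in a script than a spreadsheet, it can be sketched in a few lines of Python. This is a minimal illustration only. The dimension keys, the function names and the pairing of each example weighting with a particular dimension are assumptions made for the sketch. The six weighting values, the 1 to 5 scale and the 60 percent threshold are taken from the text above.

```python
# Illustrative weightings. The six values come from the worked example above;
# which dimension receives which value is an assumption for this sketch.
weightings = {
    "workflow_fit": 2.0,
    "adoption_realism": 1.5,
    "candidate_experience": 1.0,
    "compliance_confidence": 2.0,
    "data_utility": 0.5,
    "future_readiness": 1.0,
}

MAX_SCORE_PER_DIMENSION = 5  # each dimension is scored from 1 to 5
THRESHOLD_SHARE = 0.6        # minimum threshold: 60 percent of the maximum


def max_possible_score(weights):
    """Sum of all weightings multiplied by the top score of 5."""
    return sum(weights.values()) * MAX_SCORE_PER_DIMENSION


def minimum_threshold(weights):
    """60 percent of the maximum possible score."""
    return max_possible_score(weights) * THRESHOLD_SHARE


print("Maximum possible score:", max_possible_score(weightings))
print("Minimum threshold:", minimum_threshold(weightings))
```

Changing any weighting changes both the maximum and the threshold, which is why the weightings should be agreed before the first demo rather than adjusted afterwards.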

The six dimensions

hireful was built with these six areas in mind for organisations between 20 and 2,000 staff.

More importantly, the value of this approach is not about favouring one vendor. It gives you a clear way to compare any system against your own priorities.

Dimension 1: workflow fit

The ATS should map to your existing hiring process, or to the process you want to build, not force you to adapt your process to the software's assumptions. Rolling out an ATS with a strong workflow fit can be an opportunity to tighten up your processes. It reduces the chance of a hiring manager approaching the HR team with the CV of a candidate they have already interviewed via an agency, for a vacancy that no one else in the business knew about.

But a “strong workflow fit” does not have to mean fixed and rigid. An ATS with a fixed workflow might work perfectly for a high-volume call centre recruiter but be completely unworkable for a professional services firm hiring 15 specialist roles per year.

Example "workflow fit" questions to ask ATS vendors during your demo

• How easy is it to build a new recruitment workflow or update an existing one?

• Is there guidance available to help design the right workflow during and after implementation?

• Can we have different workflows running for different roles at the same time?

• How does the system support vacancies where we have a different process for internal candidates?

• How about roles where recruitment agencies are going to support us? How do we include them in the process?

• How will the system ensure our recruitment agency suppliers are not providing applicants who have already previously applied directly? We do not want to have to pay a fee for an applicant who is already in our system.

• Can the recruitment workflow support our vacancy approval process in all its complexity?

• Can the workflow publish the vacancy to all of the key channels and websites that we source applicants via?

• Can we integrate our HR system into the workflow process so that the hired applicant can be easily converted into a new employee record in our HR system?

• Can we integrate other third-party providers who we use for assessments, screening, background checking or similar?

• Can you show me how this handles a role where the hiring manager is not in the office?

• Can you show me something going wrong in the workflow and how you fix it? For example, a candidate stuck at the wrong stage or an automated email that fired at the wrong time.

• If we described our exact hiring process step by step, how long would it take to implement it in the system before we go live?

Workflow Fit – Scoring criteria and interpretation

Give 1 point when the workflow is fixed and cannot be adapted to your process.

Give 5 points when it is fully configurable without developer input and can support multiple workflows running at the same time.

The vendor should demonstrate this during the demo, not simply claim it is possible.

Dimension 2: adoption realism

The most common reason ATS investment fails in SMEs is not a missing feature. It is underuse.

Early enthusiasm is real. Training happens. People log in. Then daily work takes over. I have seen this more times than I can count. Features that looked promising in the demo sit untouched. Updates are released and quietly ignored.

By the time renewal comes around, someone says, 'Yeah, we are looking to leave because your system does not do A, B and C', even though it has done those things for years.

That gap is rarely about capability alone. It is about design, communication and how actively the vendor supports adoption. The real question is simple: will your team continue to use the system correctly six months after launch?

Example "adoption realism" questions to ask ATS vendors during your demo

• How do you onboard a team our size?

• How do you support new recruiters who join after launch?

• What format does your training programme take: live sessions, recorded videos, written guides or a mix?

• How easy is it for a hiring manager to log in, access their applicants and make decisions?

• Can you show me the hiring manager view specifically, and walk me through what they would do on a typical day they have a candidate to review?

• How many clicks does it take a hiring manager to review a shortlist and provide feedback?

• How easy is it for a user to pick the system back up after three weeks without logging in?

• How do you keep us aware of new features and updates?

• If you release a significant new feature, do customers have to find out themselves or do you proactively tell them?

• Do you have NPS scores for support and training that you can share?

• Do you proactively reach out if you see customers are not using key features?

• Can you show me a dashboard or report that tells me, as the account owner, how actively our team is using the system?

• What percentage of your SME customers have hiring managers logging in weekly?

• Can you give me an example of a feature that most SME customers underuse, and tell me why you think that happens?

Scoring guide for adoption realism

Understand what training and support is available. Ask yourself how complex the system is and how intuitive it feels to use. Then consider whether your users, both recruiters and hiring managers, could realistically embrace a new system given their available time and their digital literacy. Assign a score of 1 when the system appears complicated and training is insufficient.

Assign a score of 5 when the system is intuitive, training is comprehensive and ongoing, and the vendor can demonstrate strong adoption rates among SME customers.

Dimension 3: Candidate experience

Your ATS serves as a key interaction point in your employer brand. A clunky application process, slow automated responses or a broken mobile interface costs you candidates before you have even assessed them. The best ATS in the world is useless if qualified candidates abandon the application halfway through. Over time, more and more past applicants will "swerve" your vacancies if they had a bad experience the last time they applied.

Key demo questions for candidate experience

• Are you able to support different application forms for different roles, based on the profile of applicants expected to apply to each role? For instance, for graduate applications we might ask for more information, whereas for more experienced hires we might want a streamlined process.

• Do you require candidates to create a username and password at the start of the application process?

• Can candidates easily sign up for job alerts if they cannot see a suitable vacancy?

• Do you offer different application options when publishing to job boards, and can these be chosen on a job-by-job basis? For some roles we might want to insist that all applicants complete our full application on our careers site. For other roles we might be more willing to allow applicants to 'easily apply' without leaving their preferred job board.

• Are you able to support bespoke career sites that can help answer all the additional questions jobseekers might have?

• Are you able to support chatbots that help jobseekers while they browse our careers site and after they apply?

• How do you support candidates at interview stage?

• How do you support us to build a supportive onboarding process?

• Can you show me the mobile application experience from a candidate's perspective?

Test suggestion

Put yourself in the shoes of your typical applicant. Are they time-rich or time-poor? Digitally literate or a novice? Apply for a vacancy yourself during the evaluation and time the process. Score 1 if you find it frustrating. Score 5 if it is faster and cleaner than applying via LinkedIn.

hireful's application process is designed to be completable on mobile in two to three minutes. We also support 'easy apply' methods, meaning applicants from major job boards like Indeed can complete their application without leaving their preferred job board.

Dimension 4: compliance confidence

For UK SMEs, GDPR candidate data retention, right to work audit trails and equal opportunities monitoring are legal obligations. They are not optional extras.

An ATS should reduce your compliance exposure. It should not leave your HR team building manual workarounds and hoping nothing slips through the cracks. That kind of risk keeps people awake at night.

Key demo questions for compliance

• How does the system handle candidate data deletion requests?

• How does the system help support us with subject access requests?

• Does the system limit the ability for users to download or email applicant details out of the system, thereby creating additional compliance risks for GDPR?

• Does it automatically flag retention period expiry dates?

• Is it possible to edit this retention period if we change policy?

• Does it produce an audit trail for each hiring decision?

• How do you help support us if a candidate complains that our process has not been fair?

• How does the system ensure that users have all the necessary permissions to conduct a right to work check?

• How does the system ensure that the right to work assessment is robust?

• How do you ensure that we are not collecting equal opportunities monitoring data where we do not have the correct permissions?

• How do you ensure that all applicants entering the system through all the various methods (applying internally, applying informally such as by emailing a mailbox, applying via recruitment agencies, applying via our career site or a job board) have access to and have accepted our privacy policy?

• Can you support us in designing a more compliant process and in identifying and minimising compliance risks?

Scoring guide for compliance

Score 1 if the vendor cannot clearly explain how GDPR compliance is handled or if it requires manual intervention. Score 5 if the system automates compliance, produces clear audit trails and the vendor can demonstrate how they help customers design compliant processes. hireful's GDPR and compliance features are available to all customers regardless of what plan they are on and are very comprehensive and flexible.

Dimension 5: data utility

Most SMEs collect far more recruitment data than they ever use. An ATS can quietly become a filing cabinet if reporting is hard to access or too complex to interpret. Data utility is about whether the system turns activity into insight. Can you see patterns? Can you spot bottlenecks? Can you explain hiring performance to your board without building a spreadsheet from scratch?

If reporting feels like an afterthought, it probably is. This dimension tests whether the system helps you think better, not just record more.

Key demo questions for data utility

• Can you help us to build a workflow to ensure we capture all the data we need to report on?

• How easy is it to build bespoke reports?

• Can our internal data team or analyst pull our data into their reporting tools?

• How can you help us benchmark our recruitment performance?

• What additional options do you have to help us gather more data via surveys of key stakeholders at key moments in the recruiting process?

• How do you balance delivering a streamlined candidate experience with also capturing key data from applicants such as diversity data?

• How do you get this report data to us in an easy-to-understand and easy-to-access fashion?

• Can you show me the three most important recruitment metrics for an organisation like ours and how quickly I can access them in the system?

Scoring guide for data utility

Score 1 if reporting is basic and inflexible. Score 5 if the system gives you reports you actually use to make decisions, allows bespoke reporting, provides benchmark data and integrates with your existing business intelligence tools. At hireful, we have an extensive set of reports within our dashboards and continue to build on them. We provide benchmark data and can build bespoke reports, as well as give customers the ability to pull their data into their own business intelligence tools such as Power BI or Tableau.

Dimension 6: future ready

Choosing an ATS was already a long-term decision. AI has made it more so. If you are signing a three to five year contract, you need clarity on how the product will evolve.

Ask what AI features already exist and where they are used in the workflow. Ask what is planned over the next 12 to 24 months. Ask what parts of hiring the vendor believes should remain human-led.

It is important to know whether you can opt out of specific AI features, how decisions are audited, and how bias is tested. If the vendor cannot explain how its AI works in plain English, treat that as a red flag. It is the vendor’s responsibility to explain clearly how their AI operates and where it is used. If you still do not understand it after they have explained it, that is a failure of communication on their side, not yours.

Key demo questions for future readiness

• Which AI features are currently integrated into your product?

• What AI features are you planning to build in the near future?

• What do you see as the current limits of AI and what parts of the recruiting process do you believe that AI should not currently be involved in?

• How do you ensure that your organisation is staying abreast of all the latest features, updates, risks and challenges with this new technology?

• How do you help us communicate our use of your AI technology to candidates and also internal stakeholders like hiring managers?

• How do you ensure your AI is as bias-free as possible?

• Can you show me how one of your AI features works in practice and explain the logic behind it?

• If we are uncomfortable adopting a particular AI feature, can we opt out or delay implementation?

Scoring guide for future readiness

Score 1 if the vendor has no clear AI strategy or cannot explain how their AI works. Score 5 if the vendor is transparently building AI features, can explain how they work, shows how it designs against bias and allows flexible adoption at the customer's pace.

hireful takes a transparent approach to how we build AI technology. We go out of our way to explain, in plain terms, how our AI works, the value it brings and how it minimises bias, so that our customers and their own audiences (candidates and internal stakeholders) can understand it.

We take AI seriously. We also recognise that different customers adopt it at different speeds. You should understand how it works, determine where it is appropriate to use, and retain the option to disable it. If you would like to explore how it applies in your context, we are happy to discuss it.

Example case study - how to release an AI feature

When we launched our AI shortlisting feature, we knew our customers and, more importantly, their candidates would have lots of questions. We expected customers to ask:

- How does this work?

- Is the AI making decisions?

- How can we trust the AI to remove bias from the shortlisting process?

Communication is key, so we drafted several blog posts, not just to educate our customers but also to give them an easy-to-understand explanation they can share with candidates who ask questions.

We created supporting resources to answer these questions:

- Feature release overview: https://www.hireful.com/product-releases/ai-shortlisting

- How our AI shortlisting works: https://www.hireful.com/blog/how-our-ai-shortlisting-works

- How our AI shortlisting minimises bias: https://www.hireful.com/blog/how-we-minimise-bias-with-our-ai-shortlisting-feature

How to evaluate an ATS for an SME using this framework

The framework only works when applied through a structured process.

Assess your current hiring process across the six dimensions using a 1 to 5 scale before evaluating any vendors. Establish a baseline to identify weaknesses and set weightings for each dimension.

Give all vendors the same two or three hiring scenarios relevant to your organisation. A volume campaign role and a senior specialist role work well for most SMEs. Ask them to walk through how their system handles each scenario. This ensures you are comparing like with like rather than each vendor showing you their favourite features.

Score each vendor live during the demo. Use the 1 to 5 scale for each dimension during the demo, not afterwards from memory. Have one person responsible for capturing the scores and notes. If multiple stakeholders are present, agree the scores immediately after the demo while it is still fresh. Do not let each stakeholder score independently, as this defeats the purpose of having shared evaluation logic.

Compare total weighted scores, not impressions. The vendor who scores highest across the six dimensions after applying the weighting is your rational choice. If your gut disagrees with what the numbers say, identify which dimension is causing the conflict and investigate it further before deciding. The point of the framework is not to remove judgement. It is to make your judgement defensible.
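As a rough sketch of that comparison step, the Python below multiplies each dimension score by its weighting, ranks the vendors and shows which dimension contributes most to the gap between two of them. Everything in it is hypothetical: the vendor names, the weightings, the demo scores and the function names are invented for illustration and are not results from a real evaluation.

```python
# Hypothetical weightings agreed before the demos (illustrative values only).
weights = {
    "workflow_fit": 1.5,
    "adoption_realism": 2.0,
    "candidate_experience": 1.0,
    "compliance_confidence": 1.5,
    "data_utility": 0.5,
    "future_readiness": 1.0,
}

# Hypothetical 1 to 5 scores captured live during each demo.
vendor_scores = {
    "Vendor X": {"workflow_fit": 4, "adoption_realism": 3, "candidate_experience": 4,
                 "compliance_confidence": 4, "data_utility": 3, "future_readiness": 4},
    "Vendor Y": {"workflow_fit": 3, "adoption_realism": 5, "candidate_experience": 3,
                 "compliance_confidence": 3, "data_utility": 4, "future_readiness": 3},
}


def weighted_total(scores, weights):
    """Multiply each dimension score by its weighting and add up the results."""
    return sum(scores[dim] * weights[dim] for dim in weights)


def weighted_gaps(scores_a, scores_b, weights):
    """Weighted score difference (a minus b) for each dimension."""
    return {dim: (scores_a[dim] - scores_b[dim]) * weights[dim] for dim in weights}


totals = {name: weighted_total(scores, weights) for name, scores in vendor_scores.items()}
ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
for name, total in ranked:
    print(f"{name}: {total} weighted points")

# If the numbers and your gut disagree, look at the dimension with the largest gap.
gaps = weighted_gaps(vendor_scores["Vendor X"], vendor_scores["Vendor Y"], weights)
biggest_gap = max(gaps, key=lambda dim: abs(gaps[dim]))
print("Largest weighted gap:", biggest_gap, gaps[biggest_gap])
```

In this made-up example the two totals are close, and the per-dimension gaps show that one heavily weighted dimension drives most of the difference. That is the dimension to investigate further before deciding.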

How to assess the results from your ATS evaluation

If you have taken the time to set weightings, agree your key questions and define the scenarios, the scores should give you a clear direction.

In many cases, one vendor will pull clearly ahead once the numbers are applied.

Decisions are rarely made in a vacuum: budgets shift, internal politics emerge, and stakeholders may raise concerns that did not surface during the demo.

If you choose a lower-scoring provider, document your reasoning. Be explicit about the trade-offs, for example faster screening versus reduced depth of assessment, or lower cost versus lower flexibility. Use the scoring model to inform your judgement, not override it.

To make this concrete, consider a 120-person professional services firm in the Midlands that hires around 18 people a year and has no in-house recruiter. The HR manager runs all hiring with support from hiring managers. They have previously used a basic ATS but adoption was poor and they reverted to email and spreadsheets within six months.

Based on this context, here is how they weighted the six dimensions:

• Workflow fit: 1.5 (high importance)

• Adoption realism: 2.0 (business-critical, poor adoption killed their last ATS)

• Candidate experience: 1.0 (standard importance, they hire experienced professionals who will tolerate a reasonable process)

• Compliance confidence: 1.5 (high importance, they have had two GDPR subject access requests in the past year)

• Data utility: 0.5 (nice to have but not essential, they are not data-driven yet)

• Future ready: 1.0 (standard importance, they want to future-proof but it is not urgent)

Total possible score: 7.5 multiplied by 5 equals 37.5. The 60 percent threshold is 22.5 points.

They evaluated three vendors. Vendor A earned 26 points, 69 percent. Vendor B received 21 points, 56 percent, below the required threshold. Vendor C led with 28 points, 75 percent.
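The percentages and the threshold check above can be reproduced with a short Python sketch. The vendor totals, the 7.5 weighting total and the 60 percent threshold come from the case study. The function and variable names are assumptions for illustration.

```python
TOTAL_WEIGHTING = 7.5               # sum of the firm's six weightings
MAX_SCORE = TOTAL_WEIGHTING * 5     # maximum possible score: 37.5
THRESHOLD = 0.6 * MAX_SCORE         # 60 percent threshold

# Weighted totals reported for the three vendors in the case study.
vendor_totals = {"Vendor A": 26, "Vendor B": 21, "Vendor C": 28}


def summarise(total):
    """Return the score as a whole-number percentage and whether it meets the threshold."""
    percent = round(total / MAX_SCORE * 100)
    return percent, total >= THRESHOLD


for name, total in vendor_totals.items():
    percent, meets = summarise(total)
    status = "meets threshold" if meets else "below threshold"
    print(f"{name}: {total} points, {percent} percent, {status}")
```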

Vendor C emerged as the sensible selection. When the HR manager presented the decision to the finance director, she provided a written explanation demonstrating why Vendor C scored highest on the organisation’s key criteria. The decision was approved without debate because it was documented, scored and clearly linked to the organisation’s priorities. That is what good ATS selection criteria look like in practice.

Frequently asked questions about ATS selection criteria for SMEs

What is the most important ATS selection criterion for a small business?

The most important criterion is the one that would stop hiring if it failed. For many SMEs, that is core applicant-tracking reliability. If applicants cannot apply easily, if the system cannot post jobs to the job boards you rely on, or if hiring managers cannot easily access shortlisted applicants, then the hiring process stops. Identify that critical failure point in your process and test it rigorously during evaluation.

For some SMEs, that is adoption because they have struggled with usage before. For others, it is compliance because they operate in a regulated sector.

Identify the area that would cause real damage if it underperformed, and weight that at 2.0.

How many ATS systems should an SME evaluate before deciding?

Three is usually enough. Five is the upper limit before comparisons become noisy.

Oh, and do not fall into the trap that we often see of only looking at the recruitment module within your HR system. It feels convenient, but you should still compare it against at least two specialist ATS platforms so you understand what else is available.

What questions should I ask in an ATS demo?

Start with the structured questions from the six dimensions. Then step back and assess the company behind the product.

How do they support customers after implementation? How do they handle change requests? What does client retention look like?

And do not rely solely on vendor-supplied references. Even the weakest ATS providers can usually find two fairly satisfied customers. Instead, identify clients in your industry and try to speak with them directly if possible.

How do I build a business case for an ATS purchase?

To build a credible case, start with your audience: a finance director will want numbers. A managing director may care more about risk and long-term capability.

Be honest about the current cost of hiring. Time spent chasing hiring managers. Agency fees that creep up quietly. Revenue lost because roles sit open. Then show how the ATS changes that picture.

Support every claim with evidence. If your vendor cannot help you quantify the impact, that is a red flag. If you need help building the case, we are happy to support you.

What is a reasonable ATS implementation timeline for an SME?

We have implemented ATSs in a couple of days. In other cases, it has taken months. The difference is rarely the software. It is how quickly key decisions get made. Here at hireful, we believe you do not need to implement the entire ATS in one go. Doing so is possible and viable if that is what the customer wants, but it is rare for a customer to use every part of the ATS straight away, so a phased implementation is usually the best solution. It is the quickest route to a return on investment, and because the later phases are planned in from the start, you can make sure they actually happen.

Final thoughts

Most SMEs replace their ATS the same way they chose the last one. They watch a few demos. They compare feature lists and then go with whatever "feels" like the best option.

A year later, the complaints sound familiar too. Adoption is patchy. The workflow does not quite fit. And you are already thinking about replacing it.

A weighted, scenario-based evaluation takes more effort at the start. It feels slower. It is slower. It forces clearer thinking and exposes trade-offs early. It also reduces the chance that you are back in the market again in two years.

If you want to test hireful against these six areas, bring your scenarios and scoring sheet to a demo. We will work through them with you and let the scores speak.

You can reach out to our team here to book in a demo.
